Key Takeaways:

- LLMs work by learning patterns in language.

- LLMs are already driving real business use cases across functions.

- Enterprise value comes from applying them with the right data, integration, and governance.

Over the last couple of years, AI has quietly shifted from being an innovation topic to a business priority. What started with curiosity around chatbots and content generation is now a serious conversation in leadership rooms. Leaders are no longer asking what AI can do. They are asking where it fits, how fast they should move, and what impact it can realistically deliver.

At the center of this shift are large language models, the core of the generative AI applications now appearing across the enterprise. They power many of the tools teams already use and, more importantly, they are beginning to influence how work gets done.

The opportunity is clear. The path, however, is not always.

What LLMs Actually Change in a Business Context

It is easy to find technical content where large language models are explained in depth. But for business leaders, the definition is far more practical.

In practice, LLMs change how teams deal with information day to day. Instead of spending hours going through documents or piecing together inputs from different sources, much of that work starts to happen faster and with less effort.

The difference becomes visible in routine operations. Reviews that once took significant manual effort begin to move more quickly. People spend less time tracking down information and more time using it. Even decisions that used to rely on scattered inputs start to feel more structured and consistent.

This is why generative AI with large language models is increasingly being seen not just as a capability, but as a driver of productivity and operational efficiency.

What Changes When AI Starts Delivering Value

When AI starts fitting into how teams already work, the change is usually quiet. There’s no big moment where everything suddenly looks different. It’s more that certain things stop taking as long as they used to. Finance teams aren’t going back and forth on the same entries as often. Support teams don’t have to dig through multiple systems to understand a request. Operations teams start noticing issues a bit earlier than before.

None of this feels dramatic on its own. But over time, it changes the rhythm of how work gets done. Things move with less friction, and the overall flow becomes easier to manage.

Why Understanding Transformers Still Matters

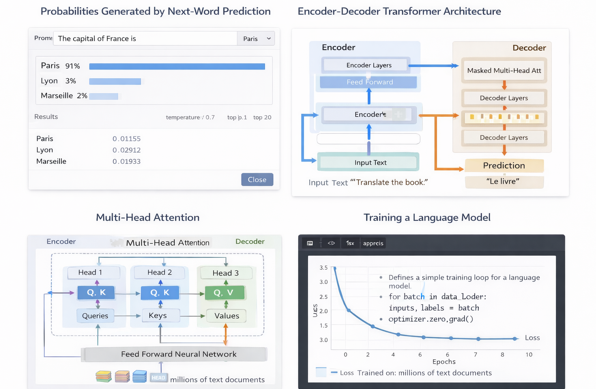

While business outcomes matter most, it helps to have a basic sense of what makes these systems work. Large language models are built on an architecture called Transformers. What makes Transformers powerful is their ability to understand relationships across an entire sentence or document, rather than processing information one step at a time.

At the core of this is something called attention. A simple way to think about it is this: instead of one perspective, the system evaluates language from multiple angles at once, and then combines those insights into a response. This is what allows modern AI systems to feel context-aware and coherent.

Transformers are built from two kinds of components:

- Encoders, which focus on understanding input (used for things like classification and translation)

- Decoders, which focus on generating text (used in chat models like GPT)

From Understanding to Generating Outcomes

Transformers typically operate in two modes. Some are designed to understand input, used in tasks like classification, search, or analysis. Others are designed to generate output, used in conversational systems, copilots, and content generation.

Most enterprise AI applications today rely on generative models that repeatedly predict the next piece of text based on the context so far. This is the foundation of how large language models work in practice: not as static systems, but as dynamic engines that respond based on patterns learned from data.
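To make "predicting the next piece" concrete, here is a minimal sketch that uses simple word-pair counts in place of a neural network. The tiny corpus and the function names are assumptions made for illustration; real LLMs learn from far larger datasets and consider much more context than the single previous word.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus, invented for this illustration.
corpus = "the invoice was approved . the invoice was paid . the invoice was approved ."

def train_bigrams(text):
    """Count how often each word follows each other word."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def generate(counts, start, steps):
    """Greedily append the most frequent follower of the last word."""
    output = [start]
    for _ in range(steps):
        followers = counts.get(output[-1])
        if not followers:
            break  # no known continuation for this word
        output.append(followers.most_common(1)[0][0])
    return output

counts = train_bigrams(corpus)
generated = generate(counts, "the", 3)
print(" ".join(generated))  # the invoice was approved
```

The sketch picks "approved" over "paid" only because that pairing appears more often in the toy corpus, which is the same pattern-over-rules behavior described above, at a much smaller scale.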

Why This Feels Like Intelligence

LLMs often feel intelligent because of three things working together. They are trained on massive datasets. They track context through attention. And they learn patterns rather than following predefined rules.

But it is important to keep perspective. These systems do not truly understand information. They recognize patterns based on what they have seen before. That is why, in enterprise environments, the quality of data and the way AI is applied matter just as much as the model itself.

What Exactly Happens When You Type a Prompt

When you send a prompt to an AI system, several steps happen behind the scenes.

Tokenization: Models do not process raw text directly. They first split it into smaller units called tokens.

For example:

"I love learning AI" becomes ["I", "love", "learning", "AI"]

Tokenization allows AI systems to process text efficiently.

Embeddings: Each token is then mapped to a list of numbers, called an embedding, that places it as a point in a high-dimensional space. Words with similar meanings tend to sit closer together in that space.

For example, “king” sits much closer to “queen” than it does to “apple.”

This is how language models represent meaning. They are not memorizing dictionary definitions. Instead, they learn relationships between words based on how they appear together in real text.
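One common way to measure "closeness" between embeddings is cosine similarity. The sketch below uses hand-picked three-dimensional vectors purely as an assumption for illustration; real models learn their embeddings from data and use hundreds or thousands of dimensions.

```python
import math

# Hypothetical embeddings, hand-picked for illustration (not from a real model).
embeddings = {
    "king":  [0.90, 0.80, 0.10],
    "queen": [0.85, 0.90, 0.15],
    "apple": [0.10, 0.20, 0.95],
}

def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

king_queen = cosine_similarity(embeddings["king"], embeddings["queen"])
king_apple = cosine_similarity(embeddings["king"], embeddings["apple"])

print(f"king vs queen: {king_queen:.3f}")
print(f"king vs apple: {king_apple:.3f}")
```

With these made-up vectors, the king/queen score comes out far higher than the king/apple score, which mirrors the relationship described above.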

Attention: Attention helps the model understand which words influence each other.

Consider the sentence: "The animal didn't cross the street because it was tired."

Attention allows the model to determine that "it" most likely refers to the animal rather than the street. This contextual awareness is what makes modern large language models in generative AI so effective.
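The core calculation behind attention can be sketched in a few lines. This is a simplified illustration of scaled dot-product attention weights for a single word, using hand-picked vectors that are assumptions for this example; a real model learns these vectors during training and also combines the weights with value vectors to produce its output.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights for one query over several keys."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    return softmax(scores)

# Hypothetical vectors, hand-picked so that "it" aligns best with "animal".
query_it = [1.0, 0.5, 0.0, 1.0]
keys = {
    "animal": [1.0, 0.6, 0.1, 0.9],
    "street": [0.1, 0.2, 1.0, 0.0],
    "tired":  [0.6, 0.1, 0.0, 0.7],
}

weights = attention_weights(query_it, list(keys.values()))
for word, weight in zip(keys, weights):
    print(f"{word}: {weight:.2f}")
```

In this toy setup "animal" receives the largest weight, so the representation of "it" would draw most heavily on "animal" rather than "street".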

A Simple Way to Think About Enterprise AI

In most cases, it begins with something simple: figuring out what actually needs to improve. It could be cycle times that are dragging, data that isn’t reliable enough, or processes that just take more effort than they should. Once that becomes clear, the conversation usually shifts to data. Not in an abstract way, but in terms of how usable it really is. If the information isn’t consistent or easy to access, even the best AI tools don’t hold up for long.

And then there’s the question of where this all fits. AI tends to work better when it’s part of the systems people already use, rather than something separate they have to switch to. When it sits inside everyday tools, whether that’s ERP, CRM, or other operational platforms, it starts to feel less like an add-on and more like part of the workflow. That’s when it becomes useful in a practical sense, especially in environments where context and reliability matter.

A Real-World Scenario: Finance Operations

Consider a typical financial close process.

In many organizations, it still depends heavily on manual checks. Teams review transactions, validate invoices, and scan contracts to ensure accuracy. The process works, but it is time-consuming and prone to delays. When AI is introduced into this workflow, the nature of the work begins to shift.

Instead of reviewing everything manually, teams focus on exceptions. Transactions that do not match expected patterns are flagged automatically. Documents are validated against system data in real time. Potential risks are identified earlier in the process. These are practical LLM use cases that show how AI begins to deliver value without disrupting existing processes.

A Brief Note on How the Technology Works

For readers who are new to AI, it helps to understand the basics without getting too technical. To see how large language models work, the place to start is the architecture that powers them: Transformers.

They allow AI systems to understand relationships between words and context, rather than processing information in isolation. This is what makes responses feel relevant and coherent. At the same time, these systems rely on patterns, not true understanding. That is why data quality, validation, and governance remain critical in enterprise environments.

The Role of Governance and Risk

For leadership teams, AI introduces a new set of considerations. Questions around data privacy, regulatory compliance, and decision reliability become more prominent. There is also a need to ensure that AI-driven outcomes remain aligned with business expectations.

Organizations that scale AI successfully do not treat these as afterthoughts. They build governance into the process early, creating the confidence needed to expand AI across functions.

Why Most AI Initiatives Fall Short

Many AI initiatives fail not because the technology is lacking, but because the approach is fragmented. Some are too focused on experimentation without a path to scale. Others rely on disconnected tools that do not integrate with core systems. In many cases, the data foundation is not strong enough to support reliable outcomes. As a result, AI remains underutilized.

Bridging this gap requires a more structured approach, one that connects data, systems, and use cases in a way that aligns with how the business operates.

How Jade Global Helps

Understanding how LLMs work is useful, but most organizations quickly move to a more practical question: where can this actually help the business? The value of AI really shows up when it becomes part of everyday workflows rather than a standalone experiment. Jade Global works with companies to explore where AI might fit naturally into existing systems and processes. This usually starts with understanding how prepared the organization is for AI, identifying areas where automation or better insights could make a difference, and outlining a roadmap that connects those ideas with clear business outcomes.

From there, the focus shifts to making things work in the real world. Jade Global supports teams in building AI agents tailored to areas like finance, HR, supply chain, and customer operations. At the same time, we help organizations think through the guardrails needed around AI. That includes data privacy, regulatory expectations, and ongoing monitoring so the systems continue to behave the way the business expects them to.

Conclusion

For enterprises, the window to adopt AI effectively is now. Understanding the technology, identifying the right LLM use cases, and building governance around it takes time. Organizations that start early will gain a significant advantage. Learning the fundamentals of large language models in generative AI is the first step. Operationalizing them strategically and securely is where true transformation happens. That is exactly where Jade Global helps organizations move forward.

Talk to our AI specialists and discover how to turn LLM capabilities into real enterprise impact.