Key Takeaways:

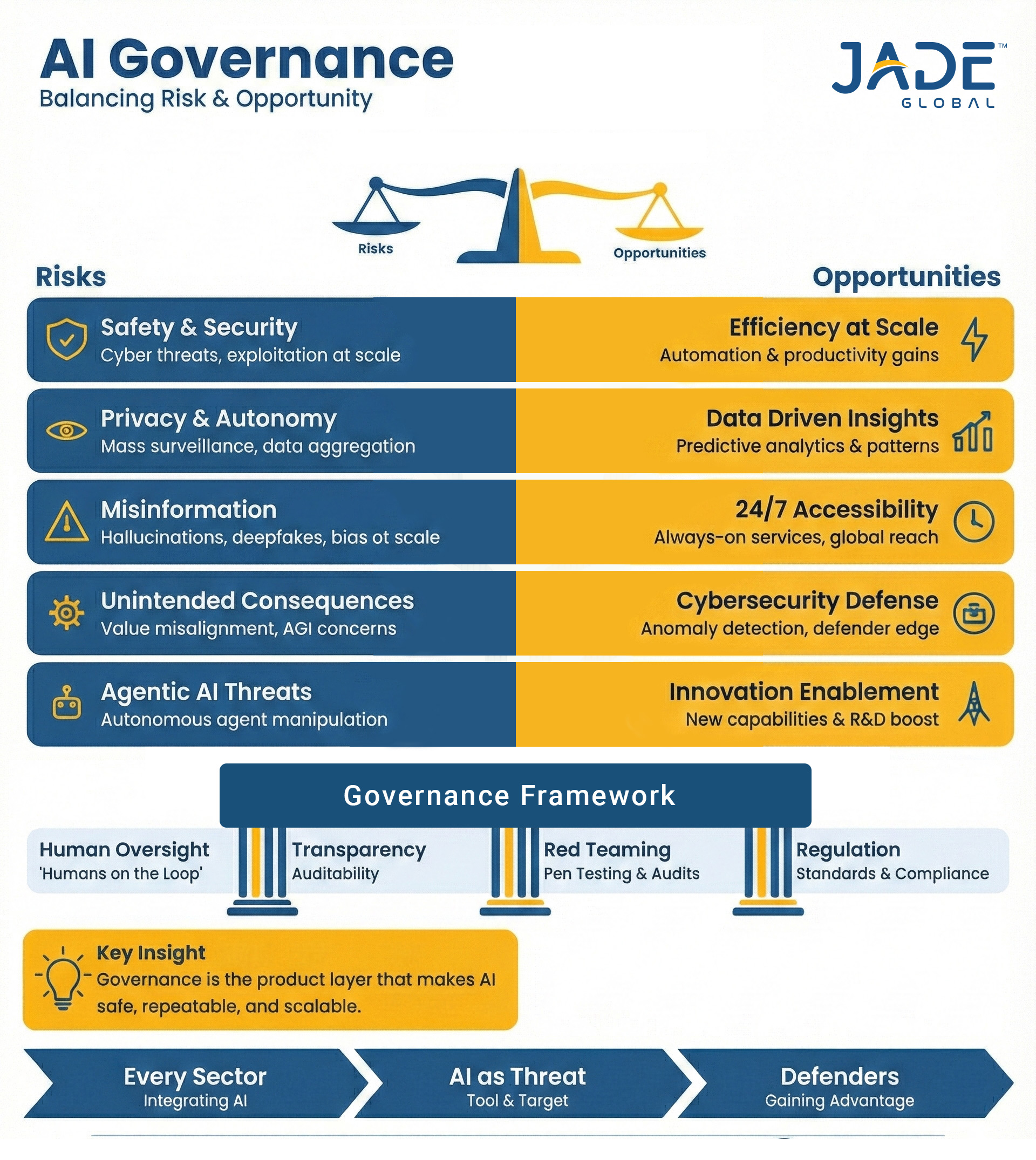

- Most AI failures are governance failures, not technology failures.

- AI in regulatory compliance needs oversight from day one.

- Enterprise AI governance enables scale without regulatory shock.

A few months ago, a federal court allowed a nationwide class-action lawsuit to proceed against a major HR technology vendor. The allegation? Its AI-powered hiring tool had been systematically screening out qualified applicants based on age.

Around the same time, Italy fined a leading AI company €15 million for processing personal data without adequate safeguards during model training. In the U.S. healthcare system, a class-action lawsuit continues against a large insurer, alleging that its AI algorithm denied coverage to elderly patients with a reported 90% error rate when decisions were reviewed by humans.

These are not hypothetical failures of Artificial Intelligence (AI). They are failures of enterprise AI governance. Not one of these incidents stemmed from poorly engineered models. Each one was a breakdown in oversight, accountability, and regulatory compliance.

And in 2025 and 2026, the cost of weak AI governance is no longer theoretical.

The Uncomfortable Truth About Enterprise AI Governance

Across industries, most organizations still treat AI governance in enterprises as an afterthought. They invest millions in AI development, automation, and generative AI capabilities, but very little in governance strategy and regulatory alignment.

By the end of 2025, a surprising number of enterprise AI initiatives had either lost momentum or disappeared altogether. The irony? The technology itself wasn’t the problem. Most of the models did what they were built to do.

What tripped organizations up were the surrounding gaps. The data feeding those systems wasn’t always reliable. Risk conversations happened late, if at all. Compliance teams were brought in after deployment, rather than before. And when tough questions surfaced, ownership was often unclear.

Regulators aren’t waiting. The EU AI Act is already being enforced, and several U.S. states are rolling out their own AI rules. Industries like healthcare and financial services are under closer watch than ever. Without enterprise AI governance, AI in regulatory compliance can create more risk than value. What starts as a small oversight can grow into a compliance issue and, before long, a trust issue.

That’s usually how enterprise AI governance fails. Not because leaders dismiss risk, but because governance is layered on after the fact instead of being built into the strategy from the beginning.

What Is AI Governance in Enterprises?

Many leaders assume governance slows progress. It doesn’t. In fact, AI governance in enterprises enables innovation to scale without creating avoidable risk.

At a practical level, AI governance in enterprises is about deciding upfront how AI will be built, used, and overseen, and who answers for it when something goes wrong. Strong AI governance best practices focus less on paperwork and more on clarity: clear ownership, thoughtful data choices, and decisions that can stand up to regulatory scrutiny.

Whether the AI system is developed in-house, applied in healthcare, used in regulatory compliance workflows, or sourced from a third-party vendor, the responsibility doesn’t shift. If your organization defines the use case and controls how the model is applied, the accountability sits with you.

AI in Regulatory Compliance: From Risk to Advantage

AI is showing up in places like fraud reviews, claims processing, credit decisions, and hiring audits. For many compliance teams, it’s already part of the daily workflow.

The concern isn’t whether to use AI in regulatory compliance. It’s how to use it without adding new exposure.

In practice, AI for regulatory compliance can take pressure off teams by handling repetitive documentation, surfacing unusual patterns earlier, and helping explain how certain decisions were reached. But if those systems aren’t properly overseen, they can introduce the very risks they were meant to reduce.

AI Governance in Healthcare: A High-Risk Environment

Healthcare is among the few industries that make governance risk visible. When AI touches clinical decisions or coverage approvals, the margin for error shrinks quickly.

AI governance in healthcare has to account for:

- Patient data privacy

- Transparency in care and coverage decisions

- Bias across age, gender, and other demographic groups

- Regulatory oversight under HIPAA, GDPR, and emerging AI laws

These systems don’t just optimize workflows. They influence diagnoses, treatment pathways, and whether a patient receives coverage. That’s why risk calibration matters.

For higher-impact use cases, organizations typically rely on:

- Human-in-the-loop oversight

- Formal impact assessments

- Ongoing fairness reviews

- Clear documentation explaining how decisions are made

Lower-risk tools, such as internal support bots, don’t demand the same intensity of controls. The key is proportionality. Governance should match the level of risk, not become a blanket layer of bureaucracy applied to everything.
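To make one of those controls concrete, here is a minimal sketch of what an ongoing fairness review might compute: approval rates per age band and the ratio between the lowest and highest rate. The sample data, the function names, and the 0.8 threshold (borrowed from the "four-fifths" rule of thumb used in U.S. employment selection) are assumptions for illustration, not a prescribed method.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group approval rate divided by the highest group approval rate."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so the rates do not differ
    return min(rates.values()) / highest

# Hypothetical sample of coverage decisions: (age_band, approved)
sample = (
    [("under_65", True)] * 80 + [("under_65", False)] * 20
    + [("65_plus", True)] * 50 + [("65_plus", False)] * 50
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
REVIEW_THRESHOLD = 0.8  # assumed threshold; set it with legal and compliance input
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 3), needs_review)
```

A low ratio does not prove bias on its own. It is a signal that a human reviewer should look at the decisions behind the numbers.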

The Regulatory Wave Is Already Here

If you are wondering when to start preparing for AI regulation, the time is now. The EU AI Act is already being phased in:

- High-risk AI compliance requirements activate in 2026

- Fines can reach up to €35 million or 7% of global annual turnover

- Extraterritorial applicability affects global enterprises
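To see what those penalty figures mean for a given company, a rough worked example helps. The sketch below assumes the higher of the two figures sets the ceiling, which is how the Act's top penalty tier is generally described; the function name and the sample turnover values are hypothetical.

```python
FIXED_CAP_EUR = 35_000_000     # fixed figure quoted above
TURNOVER_SHARE_PERCENT = 7     # percentage figure quoted above

def max_fine_exposure_eur(global_turnover_eur: int) -> int:
    """Upper bound on a fine, assuming the higher of the two figures applies."""
    turnover_based = global_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)

# Hypothetical annual turnover values in euros
for turnover in (100_000_000, 500_000_000, 2_000_000_000):
    exposure = max_fine_exposure_eur(turnover)
    print(f"turnover EUR {turnover:,} -> exposure up to EUR {exposure:,}")
```

Under this assumption, the percentage figure becomes the binding one once turnover passes €500 million, so exposure grows with the size of the business.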

In the United States, over 1,100 AI-related bills were introduced in 2025 alone. States like Texas, Colorado, and California have already enacted AI disclosure, bias prevention, and risk management requirements.

Boards are now facing fiduciary liability for AI failures. This is why an AI governance strategy and framework are no longer optional; they are board-level priorities.

AI Governance Best Practices That Actually Work

When people ask what the best practices in AI governance actually look like, the answer is usually less dramatic than expected. The companies that manage this well tend to focus on a few practical disciplines, and they stick to them.

Know what’s in play

Enterprise AI governance begins with a simple step: understand which AI systems are actually influencing decisions today. In many companies, the list is longer than expected.
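An inventory does not need special tooling to get started. As a minimal sketch, assuming a simple record per system (the field names and example entries below are hypothetical), the first useful question is which systems are live and influencing decisions:

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AISystem:
    name: str
    source: str                  # "in-house" or "third-party"
    use_case: str
    influences_decisions: bool
    in_production: bool

def systems_in_play(inventory):
    """Return systems that are live and influence decisions today."""
    return [s for s in inventory if s.in_production and s.influences_decisions]

# Hypothetical inventory entries
inventory = [
    AISystem("resume-screener", "third-party", "hiring", True, True),
    AISystem("claims-triage", "in-house", "coverage approvals", True, True),
    AISystem("helpdesk-bot", "in-house", "internal support", False, True),
    AISystem("churn-model-v2", "in-house", "marketing", True, False),
]

in_play = systems_in_play(inventory)
print([s.name for s in in_play])  # ['resume-screener', 'claims-triage']
```

Third-party tools belong in the same list as in-house models, since accountability for how they are used stays with the organization.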

Match oversight to impact

Not every model deserves the same level of scrutiny. Sound AI governance best practices adjust controls based on real-world consequences.
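One way to make proportionality operational is a small lookup from risk tier to required controls. The sketch below is an assumption-heavy illustration: the two-tier split, the classification questions, and the mapping are hypothetical, and the control names are taken from the healthcare discussion earlier in this article.

```python
from enum import Enum

class RiskTier(Enum):
    LOW = "low"
    HIGH = "high"

# Control names come from this article; the mapping itself is an assumption.
CONTROLS = {
    RiskTier.HIGH: [
        "human-in-the-loop oversight",
        "formal impact assessment",
        "ongoing fairness reviews",
        "decision documentation",
    ],
    RiskTier.LOW: ["decision documentation"],
}

def classify(affects_individuals: bool, in_regulated_domain: bool) -> RiskTier:
    """Assumed rule: either factor alone is enough to place a system in the high tier."""
    if affects_individuals or in_regulated_domain:
        return RiskTier.HIGH
    return RiskTier.LOW

def required_controls(affects_individuals: bool, in_regulated_domain: bool):
    """Look up the controls for a system based on its assumed risk tier."""
    return CONTROLS[classify(affects_individuals, in_regulated_domain)]

print(required_controls(True, True))    # e.g. a coverage-approval model
print(required_controls(False, False))  # e.g. an internal support bot
```

Real tiering schemes usually have more than two levels. The point is that the rule is written down and applied consistently instead of being negotiated system by system.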

Assign clear accountability

Every system should have:

- A business owner

- A technical lead

- A data steward

- Executive oversight

Without defined ownership, enterprise AI governance breaks down when decisions are questioned.

Build controls into the development process

Reviews around data quality, bias, documentation, and explainability should happen while systems are being built, not after they go live.
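In a delivery pipeline, this can be as simple as a release gate that blocks go-live until every review is signed off. The following is a hypothetical sketch: the review names follow the paragraph above, while the function names and the way sign-offs are recorded are assumptions.

```python
# Review names follow the text above; the gate itself is a hypothetical sketch.
REQUIRED_REVIEWS = ("data_quality", "bias", "documentation", "explainability")

def release_blockers(completed_reviews):
    """Return the required reviews that have not been signed off yet."""
    done = set(completed_reviews)
    return [review for review in REQUIRED_REVIEWS if review not in done]

def can_go_live(completed_reviews):
    """A system may ship only when no required review is outstanding."""
    return not release_blockers(completed_reviews)

blockers = release_blockers({"data_quality", "documentation"})
print(blockers)  # ['bias', 'explainability']
print(can_go_live(REQUIRED_REVIEWS))  # True
```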

Stay involved after launch

Models change as data and behavior change. Ongoing monitoring is part of responsible enterprise AI governance, not an optional extra.
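One widely used way to watch for this kind of change is the Population Stability Index (PSI), which compares the distribution of a model input or score at launch against what the system sees today. The sketch below is a simplified illustration and not a method this article prescribes: the equal-width binning, the floor for empty bins, the sample data, and the 0.25 alert threshold (a common rule of thumb) are all assumptions.

```python
import math

def population_stability_index(baseline, current, bins=10):
    """PSI between two samples, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base, curr = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))

# Hypothetical score samples: at launch, and after the population shifted
launch_scores = [i / 100 for i in range(100)]
shifted_scores = [v + 0.3 for v in launch_scores]

ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb threshold
psi_same = population_stability_index(launch_scores, launch_scores)
psi_shifted = population_stability_index(launch_scores, shifted_scores)
print(psi_same, psi_shifted > ALERT_THRESHOLD)
```

A PSI alert says the data has moved, not that the model is wrong. It is a trigger for the owners of the system to investigate.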

None of this is theoretical. It’s the operational backbone of mature enterprise AI governance frameworks, the difference between AI that scales and AI that stalls.

The Competitive Advantage of Getting Governance Right

Enterprise AI governance is often discussed in the context of risk. But the real benefit shows up in execution. When governance is built in early, projects don’t stall late in the cycle. Regulatory conversations are more straightforward. Customers aren’t left questioning automated decisions.

- Fewer last-minute escalations

- Greater confidence in AI-driven outcomes

- More predictable regulatory interactions

- Credibility that compounds over time

Done well, enterprise AI governance creates operating discipline, and discipline scales.

How Jade Global Helps Enterprises Build Responsible AI

At Jade Global, we focus on making enterprise AI governance workable inside real operating environments. Across healthcare, manufacturing, financial services, and high-tech, we help organizations:

- Assess AI readiness and underlying data maturity

- Align AI initiatives with existing governance and compliance structures

- Interpret requirements under the EU AI Act and evolving U.S. AI laws

- Design governance models that reflect actual risk exposure

- Put monitoring in place, so AI systems remain accountable after deployment

With more than two decades of enterprise transformation experience, we have seen the difference early governance makes. Enterprise AI governance is not a formality. It is infrastructure. And infrastructure built early is far easier to scale than infrastructure rebuilt under pressure.

Ready to strengthen your enterprise AI governance before risk forces your hand? Let’s start the conversation.

FAQs:

Q1: What is enterprise AI governance?

Enterprise AI governance is the set of decisions and guardrails that shape how AI is introduced and managed inside a company. It clarifies who owns the system, how data is used, and how decisions can be explained when questioned. Without it, AI moves faster than accountability.

Q2: Why do most AI initiatives fail due to governance gaps?

In many cases, the model works. The breakdown happens around it. Enterprise AI governance is brought in too late, after systems are already live. Data issues surface, ownership is unclear, and controls around AI in regulatory compliance weren’t defined upfront. That’s when projects slow down or get pulled back entirely.

Q3: How does the EU AI Act impact global enterprises?

The EU AI Act applies to any company whose AI systems affect EU residents, even if the company is based elsewhere. High-risk AI systems must meet strict compliance standards, and penalties can reach €35 million or 7% of global turnover. This makes a clear AI governance strategy and framework essential for global enterprises.

Q4: Why should CIOs prioritize AI governance?

CIOs are accountable for how AI is deployed across systems, data platforms, and cloud environments. Without enterprise AI governance, AI in regulatory compliance, healthcare, and financial services can expose the organization to legal and reputational risk. Governance protects both innovation and enterprise stability.

Q5: How does Jade Global help enterprises implement AI governance?

Jade Global helps organizations operationalize enterprise AI governance through AI readiness assessments, data governance alignment, regulatory mapping under the EU AI Act and U.S. AI laws, risk-calibrated governance design, and continuous monitoring. The focus is practical execution: turning AI governance best practices into working operating models.